#include <Layer.h>

Public Member Functions

| | Layer (const int in_size, const int out_size) |

| virtual | ~Layer () |

| int | in_size () const |

| int | out_size () const |

| virtual void | init (const Scalar &mu, const Scalar &sigma, RNG &rng)=0 |

| virtual void | forward (const Matrix &prev_layer_data)=0 |

| virtual const Matrix & | output () const =0 |

| virtual void | backprop (const Matrix &prev_layer_data, const Matrix &next_layer_data)=0 |

| virtual const Matrix & | backprop_data () const =0 |

| virtual void | update (Optimizer &opt)=0 |

| virtual std::vector< Scalar > | get_parameters () const =0 |

| virtual void | set_parameters (const std::vector< Scalar > &param) |

| virtual std::vector< Scalar > | get_derivatives () const =0 |

Detailed Description

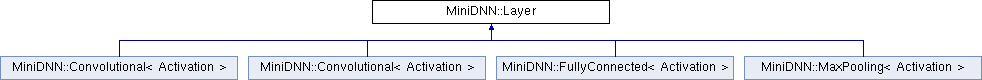

The interface for hidden layers in a neural network. It defines operations common to all hidden layers, such as initialization, forward and backward propagation, and functions to get and set the parameters of the layer.

Constructor & Destructor Documentation

◆ Layer()

| Layer (const int in_size, const int out_size) | inline |

Constructor

- Parameters

| in_size | Number of input units of this hidden layer. It must be equal to the number of output units of the previous layer. |

| out_size | Number of output units of this hidden layer. It must be equal to the number of input units of the next layer. |
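The size constraint above can be checked before training starts. The following is a minimal sketch, not part of the library: `LayerSizes` and `sizes_are_compatible` are hypothetical names that stand in for a list of real layers and only record the two values returned by in_size() and out_size().

```cpp
#include <cstddef>
#include <vector>

// Hypothetical stand-in that only records the two sizes kept by a Layer.
struct LayerSizes {
    int in_size;
    int out_size;
};

// Returns true when every layer's out_size equals the next layer's in_size.
inline bool sizes_are_compatible(const std::vector<LayerSizes>& layers) {
    for (std::size_t i = 1; i < layers.size(); ++i) {
        if (layers[i - 1].out_size != layers[i].in_size) {
            return false;
        }
    }
    return true;
}
```

For example, a 784-to-128 layer followed by a 128-to-10 layer passes the check, while a 784-to-128 layer followed by a 64-to-10 layer does not.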

◆ ~Layer()

| virtual ~Layer () | inline virtual |

Member Function Documentation

◆ in_size()

| int in_size () const | inline |

◆ out_size()

| int out_size () const | inline |

◆ init()

| virtual void init (const Scalar &mu, const Scalar &sigma, RNG &rng)=0 | pure virtual |

Initialize layer parameters using the \(N(\mu, \sigma^2)\) distribution

- Parameters

| mu | Mean of the normal distribution. |

| sigma | Standard deviation of the normal distribution. |

| rng | The random number generator of type RNG. |

Implemented in MiniDNN::Convolutional< Activation >, MiniDNN::MaxPooling< Activation >, and MiniDNN::FullyConnected< Activation >.
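As an illustration of what an init() implementation might do, the sketch below fills a flat parameter array with draws from \(N(\mu, \sigma^2)\). It is an assumption-laden example: it uses `std::mt19937` from the standard library in place of the library's RNG type, `double` in place of Scalar, and the helper name `init_normal` is hypothetical.

```cpp
#include <cstddef>
#include <random>
#include <vector>

// Fills `n` parameters with draws from N(mu, sigma^2).
// std::normal_distribution takes the standard deviation (sigma), not the variance.
// Assumption: std::mt19937 stands in for the library's RNG type.
inline std::vector<double> init_normal(std::size_t n, double mu, double sigma,
                                       std::mt19937& rng) {
    std::normal_distribution<double> dist(mu, sigma);
    std::vector<double> params(n);
    for (double& p : params) {
        p = dist(rng);
    }
    return params;
}
```

Passing the generator by reference means consecutive layers draw from one random stream, so a fixed seed reproduces the whole network's initial state.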

◆ forward()

| virtual void forward (const Matrix &prev_layer_data)=0 | pure virtual |

Compute the output of this layer

The purpose of this function is to let the hidden layer compute information that will be passed to the next layer as the input. The concrete behavior of this function is subject to the implementation, with the only requirement that after calling this function, the Layer::output() member function will return a reference to the output values.

- Parameters

| prev_layer_data | The output of the previous layer, which is also the input of this layer. prev_layer_data should have in_size rows as in the constructor, and each column of prev_layer_data is an observation. |

Implemented in MiniDNN::Convolutional< Activation >, MiniDNN::MaxPooling< Activation >, and MiniDNN::FullyConnected< Activation >.

◆ output()

| virtual const Matrix & output () const =0 | pure virtual |

Obtain the output values of this layer

This function is assumed to be called after Layer::forward() in each iteration. The output contains the values of the output hidden units after the activation function has been applied. Its main use is to provide the prev_layer_data parameter in Layer::forward() of the next layer.

- Returns

- A reference to the matrix that contains the output values. The matrix should have out_size rows as in the constructor, and a number of columns equal to that of prev_layer_data in the Layer::forward() function. Each column represents an observation.

Implemented in MiniDNN::Convolutional< Activation >, MiniDNN::MaxPooling< Activation >, and MiniDNN::FullyConnected< Activation >.
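The relationship between forward() and output() can be sketched as a loop in which each layer reads the output of the one before it. The code below is a reduced illustration under stated assumptions: `Mat` is a hypothetical column-major stand-in for Matrix, `ToyLayer` keeps only the forward-pass members of the interface, and `SumLayer` and `forward_all` are invented for this example.

```cpp
#include <cassert>
#include <cstddef>
#include <memory>
#include <vector>

// Hypothetical stand-in for Matrix: column-major, one observation per column.
struct Mat {
    int rows = 0, cols = 0;
    std::vector<double> data;
    Mat() = default;
    Mat(int r, int c, double v = 0.0)
        : rows(r), cols(c), data(static_cast<std::size_t>(r) * c, v) {}
};

// Reduced version of the interface: only the forward-pass members.
class ToyLayer {
public:
    ToyLayer(int in_size, int out_size) : m_in(in_size), m_out(out_size) {}
    virtual ~ToyLayer() {}
    int in_size() const { return m_in; }
    int out_size() const { return m_out; }
    virtual void forward(const Mat& prev_layer_data) = 0;
    virtual const Mat& output() const = 0;
private:
    int m_in, m_out;
};

// Hypothetical layer: every output unit is w times the sum of the input units.
class SumLayer : public ToyLayer {
public:
    SumLayer(int in_size, int out_size, double w)
        : ToyLayer(in_size, out_size), m_w(w) {}
    void forward(const Mat& x) override {
        assert(x.rows == in_size());       // in_size rows, one column per observation
        m_a = Mat(out_size(), x.cols);     // out_size rows, same number of columns
        for (int j = 0; j < x.cols; ++j) {
            double s = 0.0;
            for (int i = 0; i < x.rows; ++i) s += x.data[j * x.rows + i];
            for (int k = 0; k < out_size(); ++k) m_a.data[j * out_size() + k] = m_w * s;
        }
    }
    const Mat& output() const override { return m_a; }
private:
    double m_w;
    Mat m_a;
};

// Feeds the input through all layers; each layer reads the previous output().
inline const Mat& forward_all(std::vector<std::unique_ptr<ToyLayer>>& layers,
                              const Mat& input) {
    assert(!layers.empty());
    layers[0]->forward(input);
    for (std::size_t i = 1; i < layers.size(); ++i) {
        layers[i]->forward(layers[i - 1]->output());
    }
    return layers.back()->output();
}
```

Because output() returns a reference to storage owned by the layer, the result stays valid only until the next forward() call on that layer.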

◆ backprop()

| virtual void backprop (const Matrix &prev_layer_data, const Matrix &next_layer_data)=0 | pure virtual |

Compute the gradients of parameters and input units using back-propagation

The purpose of this function is to compute the gradient of input units, which can be retrieved by Layer::backprop_data(), and the gradient of layer parameters, which could later be used by the Layer::update() function.

- Parameters

| prev_layer_data | The output of the previous layer, which is also the input of this layer. prev_layer_data should have in_size rows as in the constructor, and each column of prev_layer_data is an observation. |

| next_layer_data | The gradients of the input units of the next layer, which are also the gradients of the output units of this layer. next_layer_data should have out_size rows as in the constructor, and the same number of columns as prev_layer_data. |

Implemented in MiniDNN::Convolutional< Activation >, MiniDNN::MaxPooling< Activation >, and MiniDNN::FullyConnected< Activation >.

◆ backprop_data()

| virtual const Matrix & backprop_data () const =0 | pure virtual |

Obtain the gradient of input units of this layer

This function provides the next_layer_data parameter in Layer::backprop() of the previous layer, since the derivative of the input of this layer is also the derivative of the output of previous layer.

Implemented in MiniDNN::Convolutional< Activation >, MiniDNN::MaxPooling< Activation >, and MiniDNN::FullyConnected< Activation >.
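The way backprop() and backprop_data() chain together can be sketched as a loop from the last layer to the first. This is a simplified illustration, not the library's code: `Vec` is a hypothetical one-column stand-in for Matrix, `ScaleLayer` is an invented layer with a single scalar parameter, and `loss_grad` is assumed to be supplied by the caller as the gradient with respect to the last layer's output.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

typedef std::vector<double> Vec;  // hypothetical stand-in for a one-column Matrix

// Hypothetical elementwise layer: output = w * input, with one scalar parameter w.
class ScaleLayer {
public:
    explicit ScaleLayer(double w) : m_w(w), m_dw(0.0) {}
    void forward(const Vec& prev_layer_data) {
        m_a = prev_layer_data;
        for (double& v : m_a) v *= m_w;
    }
    const Vec& output() const { return m_a; }
    void backprop(const Vec& prev_layer_data, const Vec& next_layer_data) {
        assert(prev_layer_data.size() == next_layer_data.size());
        m_dw = 0.0;
        m_din.assign(prev_layer_data.size(), 0.0);
        for (std::size_t i = 0; i < prev_layer_data.size(); ++i) {
            m_dw += prev_layer_data[i] * next_layer_data[i];  // gradient of the parameter
            m_din[i] = m_w * next_layer_data[i];              // gradient of the input units
        }
    }
    const Vec& backprop_data() const { return m_din; }
    double dw() const { return m_dw; }
private:
    double m_w, m_dw;
    Vec m_a, m_din;
};

// Backward pass from the last layer to the first. forward() must have been
// called on every layer beforehand so that output() is up to date.
inline void backward_all(std::vector<ScaleLayer>& layers, const Vec& input,
                         const Vec& loss_grad) {
    const std::size_t n = layers.size();
    for (std::size_t i = n; i-- > 0;) {
        // Input of layer i: the network input, or the previous layer's output.
        const Vec& prev = (i == 0) ? input : layers[i - 1].output();
        // Gradient of layer i's output: the loss gradient, or the next layer's input gradient.
        const Vec& next = (i + 1 == n) ? loss_grad : layers[i + 1].backprop_data();
        layers[i].backprop(prev, next);
    }
}
```

With two layers of weights 2 and 3, the composed function is y = 6x, so the input gradient returned by the first layer for a unit loss gradient is 6 per unit.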

◆ update()

| virtual void update (Optimizer &opt)=0 | pure virtual |

Update parameters after back-propagation

- Parameters

| opt | The optimization algorithm to be used. See the Optimizer class. |

Implemented in MiniDNN::Convolutional< Activation >, MiniDNN::MaxPooling< Activation >, and MiniDNN::FullyConnected< Activation >.
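One way to picture update() is as a layer handing its parameters and their gradients to the optimizer. The sketch below assumes plain gradient descent with a fixed learning rate; `ToySGD` and `ToyLayerState` are hypothetical types, and the `update(dvec, vec)` signature is an assumption made for this example rather than the documented Optimizer interface.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical optimizer with a fixed learning rate (plain gradient descent).
struct ToySGD {
    double lrate;
    // Moves `vec` one step against the gradient `dvec`.
    void update(const std::vector<double>& dvec, std::vector<double>& vec) const {
        assert(dvec.size() == vec.size());
        for (std::size_t i = 0; i < vec.size(); ++i) {
            vec[i] -= lrate * dvec[i];
        }
    }
};

// Hypothetical layer state: parameters and their gradients as flat arrays.
struct ToyLayerState {
    std::vector<double> param;
    std::vector<double> dparam;  // assumed to be filled by a preceding backprop() call
    void update(const ToySGD& opt) { opt.update(dparam, param); }
};
```

Keeping the step rule inside the optimizer lets the same layer code work with any update scheme that accepts a gradient and a parameter array.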

◆ get_parameters()

| virtual std::vector< Scalar > get_parameters () const =0 | pure virtual |

Get serialized values of parameters

Implemented in MiniDNN::Convolutional< Activation >, MiniDNN::MaxPooling< Activation >, and MiniDNN::FullyConnected< Activation >.

◆ set_parameters()

| virtual void set_parameters (const std::vector< Scalar > &param) | inline virtual |

Set the values of layer parameters from serialized data

Reimplemented in MiniDNN::Convolutional< Activation >, MiniDNN::MaxPooling< Activation >, and MiniDNN::FullyConnected< Activation >.
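A round trip through get_parameters() and set_parameters() might look like the sketch below. The layout (weights followed by biases) and the size check are assumptions chosen for illustration, and `ToyParams` is a hypothetical type; a concrete layer may serialize its parameters differently.

```cpp
#include <algorithm>
#include <cstddef>
#include <stdexcept>
#include <vector>

// Hypothetical parameter block: a weight array followed by a bias array.
struct ToyParams {
    std::vector<double> weight;
    std::vector<double> bias;

    // Serialize as [weight..., bias...].
    std::vector<double> get_parameters() const {
        const std::ptrdiff_t nw = static_cast<std::ptrdiff_t>(weight.size());
        std::vector<double> res(weight.size() + bias.size());
        std::copy(weight.begin(), weight.end(), res.begin());
        std::copy(bias.begin(), bias.end(), res.begin() + nw);
        return res;
    }

    // Restore from the same layout; reject input of the wrong length.
    void set_parameters(const std::vector<double>& param) {
        if (param.size() != weight.size() + bias.size()) {
            throw std::invalid_argument("Parameter size does not match");
        }
        const std::ptrdiff_t nw = static_cast<std::ptrdiff_t>(weight.size());
        std::copy(param.begin(), param.begin() + nw, weight.begin());
        std::copy(param.begin() + nw, param.end(), bias.begin());
    }
};
```

Checking the length before copying keeps a vector saved from a differently sized layer from silently overwriting part of the parameters.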

◆ get_derivatives()

| virtual std::vector< Scalar > get_derivatives () const =0 | pure virtual |

Get serialized values of the gradient of parameters

Implemented in MiniDNN::Convolutional< Activation >, MiniDNN::MaxPooling< Activation >, and MiniDNN::FullyConnected< Activation >.
